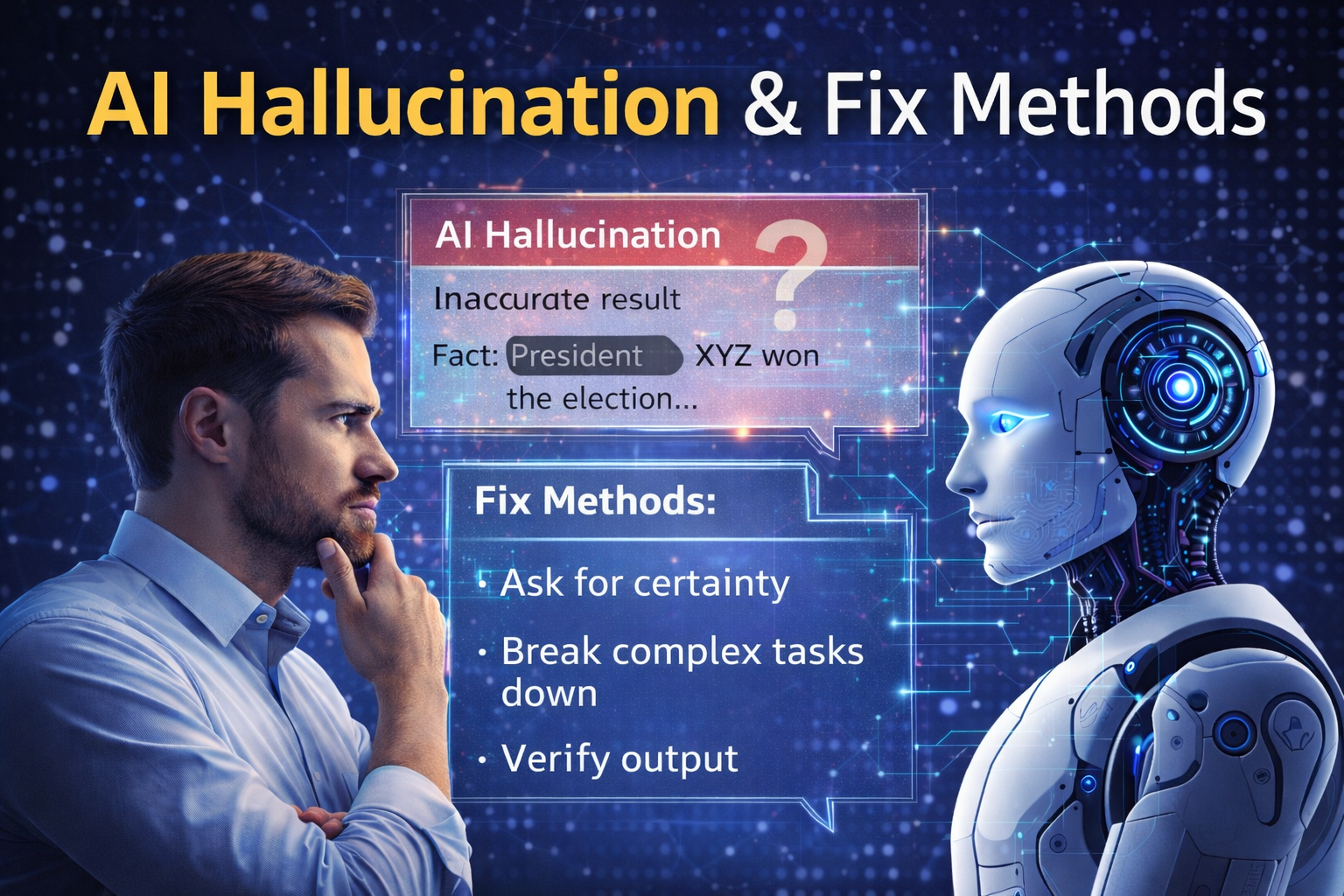

AI Hallucination: Why It Happens and How to Fix It

AI sometimes gives answers that sound confident but are completely wrong.

This isn’t a bug.

It’s called AI hallucination.

What AI hallucination really is

AI doesn’t verify facts.

It predicts the words most likely to come next, based on patterns in its training data.

When that data is missing, unclear, or contradictory, AI fills the gaps with plausible-sounding guesses.

That’s when hallucinations appear.

Common triggers

- Vague prompts

- Requests for niche information the AI has seen little or nothing about

- Overly broad questions

- Assuming AI knows your context

How to reduce hallucinations

You can’t fully eliminate hallucinations.

But you can reduce them dramatically.

Use these methods:

- Ask AI to state uncertainty explicitly

- Request sources or reasoning steps, then check that the sources actually exist

- Break complex questions into smaller ones

- Validate outputs instead of trusting them blindly
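If you prompt an AI often, the methods above can be folded into a reusable prompt template. Below is a minimal Python sketch, assuming you send prompts to a model as plain text. The `build_prompt` function, its instruction wording, and the example questions are hypothetical illustrations, not a standard API and not a guaranteed fix.

```python
# A minimal sketch: wrap a question in instructions that apply the methods
# above. The function name and wording are hypothetical, not a standard API.
from typing import Optional


def build_prompt(question: str, context: Optional[str] = None) -> str:
    """Return a prompt that asks the model to flag uncertainty and show its basis."""
    parts = []
    # Supply your own context instead of assuming the AI already knows it.
    if context:
        parts.append(f"Context:\n{context.strip()}")
    parts.append(f"Question:\n{question.strip()}")
    # Ask for explicit uncertainty and for sources or reasoning steps.
    parts.append(
        "Instructions:\n"
        "- If you are not sure, say so explicitly; do not guess.\n"
        "- Rate your confidence as high, medium, or low.\n"
        "- List the sources or reasoning steps behind your answer."
    )
    return "\n\n".join(parts)


if __name__ == "__main__":
    # Break a broad question into smaller ones and ask each separately.
    sub_questions = [
        "What year was the company founded?",
        "Who were its founders?",
    ]
    for q in sub_questions:
        print(build_prompt(q, context="Company: Example Corp (hypothetical)."))
        print("---")
```

The template only makes a good answer more likely. The reply is still a draft to validate, and any sources it lists can themselves be invented.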

Think of AI as a junior assistant

AI behaves like an intern.

Fast and helpful, but inexperienced.

The more guidance you give, the more reliable the output becomes.

Final thought

AI hallucinations don’t mean AI is useless.

They mean humans must stay in the loop.

AI produces answers.

Judgment still belongs to you.